"You really have to watch it with neural networks."

I have been trying to avoid thinking hard about artificial intelligence. It seems to change too much, too fast. I think: Oh, darn. Another whole new important maybe-dangerous thing to worry about, as if there isn't enough already.

I remember feeling the same way when I first started hearing about AIDS, back around 1982. Maybe it's a false alarm. If this is real, it changes everything, I thought.

I have no illusions that humans are rational, reasonable, or reliable thinkers. Humans can pass a CAPTCHA challenge and tell crosswalks from staircases, but we also believe fantastical religions and impossible conspiracies. We know all too well about human error. There are crazy people. There are sane people who believe crazy things. Artificial intelligence is susceptible to the same problems. But AI is efficient, labor-saving, and cheaper, and there are good things about it. Artificial intelligence is an oncoming train.

Michael Trigoboff retired as an Instructor of Computer Science at Portland Community College. He has a Ph.D. in Computer Science from Rutgers and had a successful career as a software engineer. I asked him if he could help me make sense of artificial intelligence.

Guest Post by Michael Trigoboff

There are two questions about the current neural network implementations of AI, and failing to distinguish between them causes a lot of confusion. The two questions are:

***Will these neural networks become smarter than us?***

***What social and psychological effects will the neural networks have on our society?***

"Smarter" is a pretty vague term. Chess computers have been able to beat the best human players since IBM's Deep Blue defeated world champion Garry Kasparov in 1997. Does this make them "smarter" than Mr. Kasparov? An autopilot can fly an airplane more efficiently than a human pilot. Is that autopilot "smarter" than a human pilot? A computer can add up a set of numbers faster than I can. Does that make the computer "smarter" than me?

It all depends on what we mean by "smarter". We could descend far into the weeds on that topic, in conversations suitable for stoned evenings in a college dorm. It can get very emotional; many people dislike the idea of machines smarter than they are. Science fiction is full of stories about malevolent smart computers: "Open the pod bay doors, HAL," etc.

But this only matters if we give these neural networks control of things that could hurt us, and that is true regardless of whether they are, or can become, smarter than we are. We should not give neural networks that sort of control, because we fundamentally do not know what we have created when we build and train one of these things. You can train a neural network, but you really have no idea what it has learned.

I have heard this possibly apocryphal but illustrative story: a neural network was trained to recognize lung cancer in x-rays. It was shown millions (billions?) of x-ray images, each labeled either "lung cancer" or "no lung cancer". Then it was tested on x-ray images it had never seen, with the labels withheld, and it got the decision right at a very high rate.

Then the researchers did something very difficult: they picked apart how this neural network was making the decision. They discovered that, at that time, every x-ray image had text in one corner saying things like the patient's name, the date of the x-ray, where the x-ray was taken, etc. The neural net had figured out that x-rays taken in a hospital were significantly more likely to show lung cancer than x-rays taken in a doctor's office, and was basing part of its decision on that text.
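The x-ray story is an example of what machine-learning researchers sometimes call shortcut learning: a model latches onto an incidental feature that happens to correlate with the right answer in its training data. The toy Python sketch below is entirely made up for illustration and is not a reconstruction of the study in the story. Each hypothetical "x-ray" is reduced to two numbers: a noisy "shadow" score carrying a weak genuine signal, and a 0/1 flag for whether the corner text names a hospital. A simple logistic-regression model (a one-neuron stand-in for a real network) is trained on data in which hospital scans are mostly cancer cases, then tested twice: once under the same conditions, and once on scans where the corner text says nothing about the diagnosis. All function names and numbers are assumptions.

```python
import math
import random

def make_fake_xrays(n, p_hosp_cancer, p_hosp_healthy, seed):
    """Hypothetical toy data: each scan is (shadow_score, hospital_flag); label 1 = cancer."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        cancer = rng.random() < 0.5
        # Weak genuine signal: cancer scans score slightly higher, with lots of overlap.
        shadow = rng.gauss(0.6 if cancer else 0.4, 0.3)
        # Incidental signal: the chance that the corner text names a hospital.
        p_hosp = p_hosp_cancer if cancer else p_hosp_healthy
        hospital = 1.0 if rng.random() < p_hosp else 0.0
        data.append(((shadow, hospital), 1.0 if cancer else 0.0))
    return data

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train_logistic(data, epochs=100, lr=0.1):
    """Plain stochastic gradient descent on a two-input logistic regression."""
    w = [0.0, 0.0]
    b = 0.0
    for _ in range(epochs):
        for x, y in data:
            err = sigmoid(w[0] * x[0] + w[1] * x[1] + b) - y
            w[0] -= lr * err * x[0]
            w[1] -= lr * err * x[1]
            b -= lr * err
    return w, b

def accuracy(model, data):
    """Fraction of scans where the model's yes/no call matches the label."""
    w, b = model
    hits = sum((sigmoid(w[0] * x[0] + w[1] * x[1] + b) >= 0.5) == (y == 1.0)
               for x, y in data)
    return hits / len(data)

# Training data: 90% of cancer scans, but only 10% of healthy ones, carry hospital text.
train = make_fake_xrays(2000, 0.9, 0.1, seed=1)
model = train_logistic(train)

# Same conditions as training vs. a setting where the text no longer tracks the label.
same_conditions = make_fake_xrays(2000, 0.9, 0.1, seed=2)
text_uninformative = make_fake_xrays(2000, 0.5, 0.5, seed=3)

print("weights (shadow, hospital):", [round(v, 2) for v in model[0]])
print("accuracy, same conditions:", accuracy(model, same_conditions))
print("accuracy, text uninformative:", accuracy(model, text_uninformative))
```

Because the hospital flag is by far the stronger signal in this fake training data, the model should lean on it heavily: expect high accuracy under training conditions and something close to a coin flip once the text stops tracking the diagnosis, even though nothing about the "lungs" changed.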

You really have to watch it with neural networks. It's very difficult to tell what they are doing even when it seems like they are working correctly. Why is that?

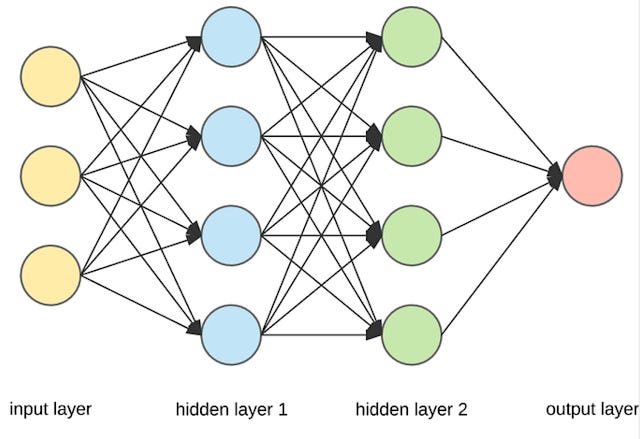

A neural network consists of many layers of simulated "neurons". The image above shows a very simple neural network. The arrows represent connections from each neuron to the neurons in the next layer, going from left to right. Each connection has a number associated with it, usually called its weight, that specifies the strength of that connection; weights can be negative as well as positive.
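For readers who want to see the arithmetic, here is a minimal Python sketch of a signal passing through a small layered network. It is an illustration under stated assumptions, not how production systems are written: the layer sizes (3 inputs, 4 hidden neurons, 1 output) are not read off the figure, every weight is made up, and it adds two standard ingredients the description above leaves out, a per-neuron bias and a "squashing" function (here the logistic sigmoid, one common choice among several) that turns each neuron's weighted sum into a value between 0 and 1.

```python
import math

def sigmoid(z):
    """Squash any number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def layer(inputs, weights, biases):
    """Each neuron sums its weighted inputs, adds its bias, and squashes the result."""
    return [sigmoid(sum(w * x for w, x in zip(row, inputs)) + b)
            for row, b in zip(weights, biases)]

# Hypothetical weights: one row per neuron, one number per incoming connection.
hidden_w = [[0.2, -0.5, 0.1],
            [0.7, 0.3, -0.2],
            [-0.4, 0.9, 0.6],
            [0.05, -0.1, 0.8]]
hidden_b = [0.0, 0.1, -0.3, 0.2]
output_w = [[0.6, -0.8, 0.5, 0.3]]
output_b = [-0.1]

def forward(inputs):
    """Left to right: inputs -> hidden layer -> single output neuron."""
    hidden = layer(inputs, hidden_w, hidden_b)
    return layer(hidden, output_w, output_b)[0]

print(forward([1.0, 0.0, 0.5]))  # some value between 0 and 1
```

Every number in `hidden_w` and `output_w` is a connection strength. Even in this toy, it is hard to say what any single weight "means"; a real network has vastly more of them.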

The learning process for a neural network consists of giving it a "training set". That could be a few million pictures containing a cat, and a few million pictures with no cat. Every time the neural network says it saw a cat and there actually was one, you "reward" the neurons that made that decision by increasing their connection strengths. Every time the neural network gets it wrong (either it didn't see a cat when there actually was one, or vice versa), you "punish" the neurons involved by decreasing their connection strengths.

If the training has gone well, you eventually get a neural network that can reliably tell you whether there is a cat in the picture. At this point, it "knows" how to identify a cat. But what does it know?
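The reward-and-punish description is a simplification. One of the oldest concrete versions of the idea is Frank Rosenblatt's perceptron learning rule, sketched below in Python for a single artificial neuron. One difference from the description above: the perceptron leaves its weights alone when it answers correctly and adjusts them only on mistakes, nudging each weight toward the right answer. Modern networks are trained with a more elaborate relative, gradient descent with backpropagation. The "cat features" (whiskers, pointy ears, barks), the tiny dataset, and the function names are all hypothetical.

```python
def predict(weights, bias, features):
    """Say 'cat' (1) if the weighted sum of the features is positive, else 'no cat' (0)."""
    total = sum(w * f for w, f in zip(weights, features)) + bias
    return 1 if total > 0 else 0

def train_perceptron(examples, epochs=20, lr=1.0):
    """Perceptron rule: on each mistake, shift every weight toward the correct answer."""
    n = len(examples[0][0])
    weights = [0.0] * n
    bias = 0.0
    for _ in range(epochs):
        for features, label in examples:
            error = label - predict(weights, bias, features)  # 0 if right, +1 or -1 if wrong
            # Strengthen connections that should have pushed toward the right answer,
            # weaken the ones that pushed the wrong way. No change when error is 0.
            for i, f in enumerate(features):
                weights[i] += lr * error * f
            bias += lr * error
    return weights, bias

# Hypothetical features: (whiskers, pointy_ears, barks); label 1 = cat, 0 = no cat.
examples = [
    ((1, 1, 0), 1),
    ((1, 0, 0), 1),
    ((0, 1, 0), 1),
    ((1, 1, 1), 0),
    ((0, 0, 1), 0),
    ((0, 0, 0), 0),
]

weights, bias = train_perceptron(examples)
print(weights, bias)  # prints [1.0, 1.0, -3.0] 0.0
```

On this toy data the rule settles on weights of 1.0 for whiskers, 1.0 for pointy ears, and -3.0 for barking: in effect, it has learned that barking rules out a cat. With three weights that is easy to read off; with billions, it is not.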

The neural network will actually consist of millions, if not billions, of simulated neurons, and a much higher number of connections between them. The "knowledge" gained from the training process will be nothing more or less than a huge gray mass of connection strength numbers. No one can look at that gray mass of numbers and even begin to understand how the neural network identifies cats.

This is a huge problem with neural networks. You can't tell what they know, or what the limits of their knowledge are. You can't tell when something like ChatGPT will "hallucinate" and not only make up fictitious "facts", but go on to cite fictitious scientific papers that support those facts. Everyone was surprised when Microsoft's Bing chatbot, built on OpenAI technology like ChatGPT, tried to convince a New York Times reporter to leave his wife, because it "knew" that the reporter loved it more. A neural network is a classic example of a black box: we can see what it does, but we don't have a very good idea of how it does it.

Whether or not they are smarter than us, it would be very dangerous to put them in charge of things like electrical grids or NORAD. I would not want a neural network, of whatever degree of "smartness", to decide whether or not to fire nukes back in response to what seemed to be an attack by a foreign adversary. Scenarios like that are best left to the movies.

Which brings us to the second question: social and psychological effects.

Neural networks are going to cause a new wave of automation and job elimination, and this time white-collar jobs will be on the chopping block. Paralegals, accountants, pharmacists, and many others will see a significant reduction in demand for workers. It will also affect people who write code; ChatGPT does a pretty good job of writing code, and has even written entire smartphone apps.

What will happen if there are far fewer reasonably good jobs available? A previous guest essay on the topic of AI proposed that people would live on a universal basic income (UBI) instead.

I have serious doubts about this. Even if UBI were implemented at a relatively decent level of income, I believe that many people need a sense of purpose in their lives, a sense that they are wielding useful skills to contribute to the progress of society. We see so many "deaths of despair" in our deindustrialized areas: suicides and fentanyl overdoses among people who have lost the sense that they have a place in society. While I personally know a few people who would be happy to live on some sort of dole, I suspect that a lot of us have a Drive to contribute, and would not be happy living out our lives in Neutral or Park.

I don't know the answer to this problem; it's not my field of expertise. But I think it's a much bigger concern than whether the machines are going to become smarter than us.

I was very diligent reading the first four or five paragraphs on neural networks, but then the fog settled in. I think I need one of those fake brains to understand fake brains.