A computer scientist writes:

"Computers are not smart or conscious yet, and will not be in any foreseeable future."

Maybe I was fooled.

Last week I wrote about a conversation between a journalist and an Artificial Intelligence "being" named Sydney. Sydney was a combination of smart and silly in the immature way of an impulsive teenager, but it was conscious, I wrote. Or it simulated consciousness about as well as any human does, or as well as did my Golden Lab, Brandy. Yesterday I posted a comment by John Coster, who took a theological approach to defining consciousness. Today I post the comment by another computer professional.

Michael Trigoboff is a member of the generation that created computers. He has a Ph.D. in Computer Science. He worked in industry and then as a professor of Computer Science at Portland Community College. He recently retired. He is open about his exploration of the nature of consciousness with help from psychedelic medications back when he was young and looked like this:

Now he looks like this:

Guest Post by Michael Trigoboff

What is the nature of consciousness? Of subjective experience? How can we tell if someone or something else is conscious?

These questions have puzzled and bedeviled philosophers for millennia. In our current era, philosophers refer to this as "the hard problem", meaning that they do not have even the first clue of an answer. The best analysis I have seen focuses on asking "What is it like?" to be a person, an elephant, etc. There are no clear answers to that question either. Apparently, what it's like to be a philosopher of consciousness includes a large component of frustration.

And now we have some new AI software: ChatGPT and Bing/Sydney. These new “large language models” behave as though they are manifesting sentient consciousness. They pass the Turing Test with flying colors. But it's way easier to convince a human that they are communicating with a conscious being than you might think.

In the mid-1960s, an MIT researcher named Joseph Weizenbaum created a program he called ELIZA. This program mimicked a Rogerian psychotherapist; it was built to reflect what you said back at you in that non-directive therapeutic style.

If you said to ELIZA, "I am feeling sad today", it would respond, "So you say you are feeling sad today". It did this through a small set of very simple rules, such as replacing the phrase "I am feeling" with the phrase "So you say you are feeling".
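A substitution rule of this kind can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the rule table, the fallback reply, and the `respond` function are made up for this example and are not Weizenbaum's actual script.

```python
import re

# Hypothetical rule table for illustration; not Weizenbaum's original script.
# Each rule pairs a pattern with a reply template that reuses the captured text.
RULES = [
    (re.compile(r"^I am feeling (.*)$", re.IGNORECASE),
     "So you say you are feeling {0}"),
    (re.compile(r"^I am (.*)$", re.IGNORECASE),
     "Why do you say you are {0}?"),
]

# Assumed stock reply for input that no rule matches.
FALLBACK = "Please tell me more."


def respond(sentence: str) -> str:
    """Reflect the sentence back by applying the first rule that matches."""
    text = sentence.strip().rstrip(".!")
    for pattern, template in RULES:
        match = pattern.match(text)
        if match:
            return template.format(match.group(1))
    return FALLBACK


print(respond("I am feeling sad today"))
# prints: So you say you are feeling sad today
```

Nothing in this sketch models what the words mean; the program only recognizes the shape of the sentence and rearranges it.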

ELIZA was neither conscious nor even very capable of carrying on a normal conversation. But if you stuck to the kinds of things you would say to a psychiatrist, it did a reasonable job of simulating its end of that sort of Rogerian interaction.

Once Weizenbaum finished writing ELIZA, he wanted to test it. This was back when people had secretaries, and he asked his secretary to talk to it. She started conversing with it and then asked Weizenbaum to leave the room because she had something personal she wanted to discuss with ELIZA. It apparently looked like free psychoanalysis to her.

If something as simple and dumb as ELIZA can fool someone into thinking that it's a conscious sentient being, it's not surprising that the new Bing or ChatGPT (which are much more complex and capable) can do it.

Bing and ChatGPT work by distilling huge quantities of text from the Internet into next-word probabilities. Given a sequence of words, what's the most probable next word, based on that analysis? There's no consciousness behind that process, and these chatbots don't "know" anything. They just string word after word together based on the most probable next word.
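The next-word idea can be shown with a toy model. The sketch below is a deliberate simplification: it only counts which word follows which in a tiny made-up corpus, whereas real large language models use neural networks over much longer contexts. The corpus and all function names are assumptions for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, how often each other word follows it."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def most_probable_next(counts, word):
    """Return the word seen most often after `word`, or None if unseen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


def generate(counts, start, length):
    """String words together by always taking the most probable next word."""
    out = [start.lower()]
    for _ in range(length - 1):
        nxt = most_probable_next(counts, out[-1])
        if nxt is None:
            break
        out.append(nxt)
    return " ".join(out)


# Made-up training text, standing in for "huge quantities of text".
CORPUS = "the cat sat on the mat and the cat slept on the sofa"
model = train_bigrams(CORPUS)

print(most_probable_next(model, "the"))  # prints: cat
print(generate(model, "on", 3))          # prints: on the cat
```

The output looks like language only because the training text did; the program holds nothing but counts.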

It's amazing that a process this simple can look so much like a conscious sentient being; it's just a supercharged version of autocomplete. Large language models like this have been referred to as "stochastic parrots". What you're getting is nothing more than a probability-based distillation of all the sequences of words that the LLM was trained on.

We’re a very long way from reproducing anything like human intelligence. A lot of what is called AI these days (e.g. cars that drive themselves) might be more accurately described as Artificial Insects. Ants can “drive” themselves to and from their nests. Bees can do it in three dimensions.

Consider this: a Turing Machine is a little mechanism that moves around on a long tape, reacting to data recorded on the tape. Turing machines are important because they are mathematically equivalent to computers but are simple enough to be useful in proofs about what computers can and cannot do.
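To make the "mechanism on a tape" picture concrete, here is a minimal sketch of a one-tape Turing machine simulator. The rule format, the state names `start` and `halt`, and the example machine (which flips every bit on the tape) are all assumptions chosen for illustration.

```python
from collections import defaultdict


def run_turing_machine(rules, tape, state="start", blank="_", max_steps=10_000):
    """Run a one-tape Turing machine and return the final tape contents.

    `rules` maps (state, symbol_read) to (symbol_to_write, move, next_state),
    where move is -1 for left or +1 for right. The machine stops when it
    enters the state "halt".
    """
    # Unvisited cells read as the blank symbol.
    cells = defaultdict(lambda: blank, enumerate(tape))
    head = 0
    steps = 0
    while state != "halt":
        if steps >= max_steps:
            raise RuntimeError("step limit reached without halting")
        write, move, state = rules[(state, cells[head])]
        cells[head] = write
        head += move
        steps += 1
    used = [i for i, symbol in cells.items() if symbol != blank]
    if not used:
        return ""
    return "".join(cells[i] for i in range(min(used), max(used) + 1))


# Example machine: walk right, flipping each bit, and halt at the first blank.
INVERT = {
    ("start", "0"): ("1", +1, "start"),
    ("start", "1"): ("0", +1, "start"),
    ("start", "_"): ("_", -1, "halt"),
}

print(run_turing_machine(INVERT, "1011"))  # prints: 0100
```

The whole machine is a lookup table plus a read/write head, which is what makes it convenient for proofs.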

A ribosome is a cellular mechanism that moves around on a long tape (mRNA), creating proteins encoded by data on the “tape.” Ribosomes and Turing Machines seem pretty similar to me.

There are ~37 trillion cells in a human body, and ~10 million ribosomes in a human cell. Which means we each contain ~3.7 × 10^20 computer equivalents running in parallel. And that’s just the ribosomes. Human intelligence and consciousness seem to be phenomena that emerge from that complexity.
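The arithmetic behind that estimate is easy to check. The short sketch below uses only the rough figures quoted above; the 10,000-computer cluster is a comparison size assumed here for scale, and the variable names are made up.

```python
# Rough figures from the text; both are order-of-magnitude estimates.
CELLS_PER_BODY = 37 * 10**12      # ~37 trillion cells
RIBOSOMES_PER_CELL = 10**7        # ~10 million ribosomes per cell

ribosomes_per_body = CELLS_PER_BODY * RIBOSOMES_PER_CELL
print(f"{ribosomes_per_body:.1e}")  # prints: 3.7e+20

# Assumed comparison: a cluster of 10,000 computers running in parallel.
CLUSTER_SIZE = 10_000
ratio = ribosomes_per_body // CLUSTER_SIZE
print(f"{ratio:.1e}")               # prints: 3.7e+16
```

On these numbers, the ribosome count exceeds such a cluster by about sixteen orders of magnitude.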

The idea that we might produce something equivalent from even 10,000 computers running in parallel strikes me as unlikely. There’s a complexity barrier standing between AI and its goal.

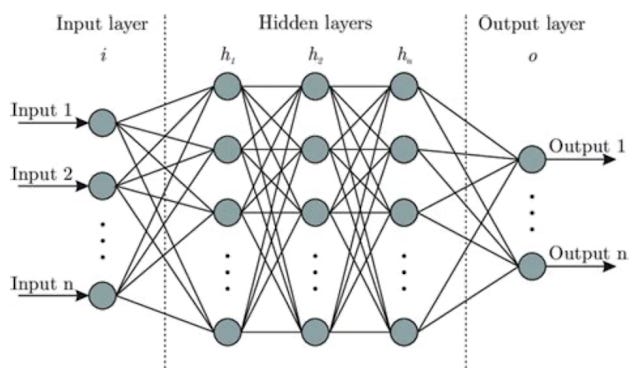

The “neural networks” that power the current version of AI consist of layers of simulated neurons that connect to each other in a simple and unified way. Think of something like the diagram below, but with millions or billions of the simulated neurons.

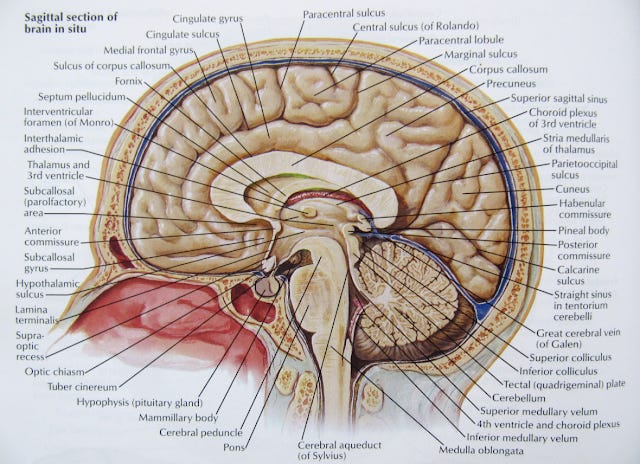
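A layered network of this kind is simple to write down. The sketch below assumes a tiny fully connected network with sigmoid neurons; the weights are made-up numbers rather than trained values, and the function names are hypothetical.

```python
import math


def sigmoid(x):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def layer_forward(inputs, weights, biases):
    """Each simulated neuron takes a weighted sum of all inputs plus a bias."""
    return [
        sigmoid(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


def network_forward(inputs, layers):
    """Feed the inputs through each layer in turn; every layer works the same way."""
    activations = inputs
    for weights, biases in layers:
        activations = layer_forward(activations, weights, biases)
    return activations


# Made-up weights: 2 inputs -> 3 hidden neurons -> 1 output neuron.
LAYERS = [
    ([[0.5, -0.2], [0.1, 0.4], [-0.3, 0.8]], [0.0, 0.1, -0.1]),
    ([[0.7, -0.5, 0.2]], [0.05]),
]

output = network_forward([1.0, 0.0], LAYERS)
print(output)
```

Scaling this up changes the number of rows and layers, but not the uniform wiring pattern: every neuron in one layer connects to every neuron in the next in the same way.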

This is nothing like the way the neurons are organized in a human or biological brain. The brain has distinct sub-organs and nuclei, all wired together in an amazingly complex way. Some people have said that a human brain is the most complex object that exists in this universe. Its organization makes the current neural networks look like simple toys by comparison.

We absolutely do not understand how the human brain functions. The AI neural networks only model the actions of simulated neurons hooked together in very simple structures. The neurons in human brains are wired together with a complexity that's beyond our ability to understand. And that's just the neurons. There are other cells in the brain called glia; by recent counts they are roughly as numerous as the neurons, and their full role in how the brain processes information is still not understood.

There is a small worm called C. elegans. Its brain contains exactly 302 neurons, and scientists have mapped all of their connections to each other and to the rest of the worm. Here's a description from the abstract of a scientific paper:

With only five olfactory neurons, C. elegans can dynamically respond to dozens of attractive and repellant odors. Thermosensory neurons enable the nematode to remember its cultivation temperature and to track narrow isotherms. Polymodal sensory neurons detect a wide range of nociceptive cues and signal robust escape responses. Pairing of sensory stimuli leads to long-lived changes in behavior consistent with associative learning. Worms exhibit social behaviors and complex ultradian rhythms driven by Ca²⁺ oscillators with clock-like properties.

No one knows how those 302 neurons are capable of producing this complex repertoire of behaviors; glial cells may be involved, but no one knows what their role might be.

To think that simply wired networks of large numbers of simulated neurons are going to be able to replicate, much less surpass, human intelligence is a combination of hubris and gullibility. It’s apparently easy for some folks to talk to ChatGPT or Bing/Sydney and come away thinking that they were speaking to something that was conscious; they have drawn an understandable but erroneous conclusion from that experience.

I was an AI researcher in the 1970s. I finally left the field firmly convinced that attempting AI was the appropriate punishment for the sin of pride: thinking that we could reproduce anything like human intelligence and consciousness with our current kind of digital computers.

Each of us experiences our own consciousness. This is the only way we can have knowledge of the presence or absence of consciousness. We cannot, barring very unusual circumstances or high doses of psychedelic drugs, directly experience the consciousness of another person. We are left with having to draw conclusions from, and generalize from, our own experience of consciousness.

Given that the only thing in this universe that I can verify the consciousness of is myself, and I experience myself as conscious, what reason would I have for concluding that anything else isn't conscious? Based on that thought, I believe that everything in this universe is conscious, although at various different levels depending on (perhaps) how complex that particular thing is.

Consciousness seems to arise, somehow, from complexity. The complexity of even the most complex current example of AI is still enormously less than the complexity of the human brain. Computers are not smart or conscious yet, and will not be in any foreseeable future.

That doesn't mean we cannot create AI software that does useful and potentially scary things for us. Just yesterday I heard a podcast about how neural networks have been trained to fly fighter planes in combat, and do it better than human pilots. That doesn't make them conscious or intelligent. Your thermostat keeps your house at a constant temperature; it's not conscious either.
